The Argument for Connectivity Agnosticism

It’s about push vs. pull… but it shouldn’t be.

There has been a lot of heated debate on whether pushing telemetry data from systems or pulling that data from systems is better. If you’re just hearing about this argument now, bless you. One would think that this debate is as ridiculous as vim vs. emacs or tabs vs. spaces, but it turns out there is a bit of meat on this bone. The problem is that the proposition is wrong. I hope that here I can reframe the discussion productively to help turn the corner and walk a path where people get back to more productive things.

At Circonus, we’ve always been of the mindset that both push and pull should have their moments to shine. We accept both, but honestly, we are duped into this push vs. pull dialogue all too often. As I’ll explain, the choices we are shown aren’t the only options.

The idea behind pushing metrics is that the “system” in question (be it a machine or a service) should emit telemetry data to an “upstream” entity. The idea of pull is that some “upstream” entity should actively query systems for telemetry data. I am careful not to use the word “centralized” because in most large-scale modern monitoring systems, all of these bits (push or pull) are decentralized rather considerably. Let’s look through both sides of the argument (I’ve done the courtesy of striking through the claims that are patently false):

Push has some arguments:

- ~~Pull doesn’t scale well.~~

- I don’t know where my data will be coming from.

- Push works behind complex network setups.

- When events transpire, I should push; pulling doesn’t match my cadence.

- ~~Push is more secure.~~

Pull has some arguments:

- I know better when a machine or service goes bad because I control the polling interval.

- Controlling the polling interval allows me to investigate issues faster and more effectively.

- ~~Pull is more secure.~~

To address the strikethroughs in turn: Pulling data from 2 million machines isn’t a difficult job. Do you have more than 2 million machines? Pull scales fine… Google does it. Whether you pull data from a secure place to the cloud or push data from a secure place to the cloud, you are moving data across the same boundary and are thus exposed to the same security risks. It is worth mentioning that in a setup where data is pulled, the target machine need not be able to even route to the Internet at all, thus making the attack surface more slippery. I personally find that argument to be weak and believe that if the right security policies are put in place, both methods can be considered equally “securable.” It’s also worth mentioning that many of those making claims about security concerns have wide open policies about pushing information beyond the boundaries of their digital enclave and should spend some serious time reflecting on that.

Now to address the remaining issues.

Push: I don’t know where my data will be coming from.

Yes, it’s true that you don’t always know where your data is coming from. A perfect example is web clients. They show up to load a page or call an API, and then could potentially disappear for good. You don’t own that resource and, more importantly, don’t pay an operational or capital expenditure on acquiring or running it. So, I sympathize that we don’t always know which systems will be submitting telemetry information to us. On the flip side, for those machines or services that you know about and pay for, it’s just flat-out lazy to not know what they are. In the case of a short-lived resource, it is imperative that you know when it is doing work and when it is gone for good. Considering this, it would stand to reason that the resource being monitored must initiate this. This is an argument for push… at least on layer 3. Whoa! What? Why am I talking about OSI layers? I’ll get to that.

Push: Works behind complex network setups.

It turns out that pull actually works behind some complex network configurations where push fails, though these are quite rare in practice. Still, it also turns out that TCP sessions are bidirectional, so once you’ve conquered setup you’ve solved this issue. So this argument (and the rare counterargument) are layer 3 arguments that struggle to find any relevance at layer 7.

Push: When events transpire, I should push; pulling doesn’t match my cadence.

Finally, some real meat. I’ve talked about this many times in the past, and it is 100% true that some things you want to observe fall well into the push realm and others into the pull realm. When an event transpires, you likely want to get that information upstream as quickly as possible, so push makes good sense. And as this is information… we’re talking layer 7. If you instrument processes starting and stopping, you likely don’t want to miss something. On the other hand, the only way to never miss a change in disk space usage on a system is to log every block allocation and deallocation. Sounds like a bit of overkill, perhaps? This is a good example of where pulling that information at an operator’s discretion (say every few seconds or every minute) would suffice. Basically, sometimes it makes good sense to push on layer 7, and sometimes it makes better sense to pull.
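To make the split concrete, here is a minimal Python sketch. Everything named in it is an assumption for illustration: `event_queue` stands in for whatever upstream channel you have, and `on_process_event` and `read_disk_usage` are made-up helpers. Process events get pushed the moment they occur; disk usage is sampled only when someone asks.

```python
import queue
import shutil

# Hypothetical stand-in for an upstream channel; a real agent would
# send these over the network instead of into a local queue.
event_queue: "queue.Queue[dict]" = queue.Queue()


def on_process_event(name: str, action: str) -> None:
    """Layer 7 push: emit the event as it happens so none is missed."""
    event_queue.put({"process": name, "action": action})


def read_disk_usage(path: str = ".") -> dict:
    """Layer 7 pull: sample current state only when it is requested."""
    usage = shutil.disk_usage(path)
    return {"total": usage.total, "used": usage.used, "free": usage.free}


# Events are pushed as they transpire...
on_process_event("nginx", "start")
on_process_event("nginx", "stop")

# ...while disk usage is read at whatever cadence the operator picks.
sample = read_disk_usage()
```

Nothing here says who opened the connection; this is only about who speaks first once there is one.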

Pull: I know better when a machine or service goes bad because I control the polling interval.

This, to me, comes down to the responsible party. Is each of your million machines (or 10) responsible for detecting failure (in the form of absenteeism) or is that the responsibility of the monitoring system? That was rhetorical, of course. The monitoring system is responsible, full stop. Yet detecting failure of systems by tracking the absenteeism of data in the push model requires elaborate models on acceptable delinquency in emissions. When the monitoring system pulls data, it controls the interval and can determine unavailability in a way that is reliable, simple, and, perhaps most importantly, simple to reason about. While there are elements of layer 3 here if the client is not currently “connected” to the monitoring system, this issue is almost entirely addressed on layer 7.
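A rough Python sketch of the difference, with made-up names (`pull_is_up`, `push_is_delinquent`) and an arbitrary `grace_factor` that I am assuming purely for illustration. The pull check asks a yes-or-no question with a timeout; the push check has to guess how late is too late.

```python
import socket


def pull_is_up(host: str, port: int, timeout_s: float = 2.0) -> bool:
    """Pull: the monitor asks. No answer within the timeout means down."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        return False


def push_is_delinquent(
    last_seen_s: float,
    now_s: float,
    expected_interval_s: float,
    grace_factor: float = 3.0,  # assumed tolerance; tuning this is the hard part
) -> bool:
    """Push: the monitor waits, and must model acceptable lateness."""
    return (now_s - last_seen_s) > expected_interval_s * grace_factor
```

The second function looks just as short, but every emitter with its own cadence needs its own `expected_interval_s` and tolerance, and that bookkeeping is where the elaborate models come from.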

Pull: Controlling the polling interval allows me to investigate issues faster and more effectively.

For metrics in many systems, taking a measurement every 100ms is overkill. I have thousands of metrics available on a machine, and most of them are very expressive on observation intervals as large as five minutes. However, there are times at which a tighter observation interval is warranted. This is an argument of control, and it is a good argument. The claim that an operator should be able to dynamically control the interval at which measurements are taken is a completely legitimate claim and expectation to have. This argument and its solution live in layer 7.
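As a sketch of what that control might look like, here is a small Python scheduler whose interval can be changed while it runs. The class and method names (`PollSchedule`, `set_interval`, `due`) are hypothetical, and the clock is passed in as a plain number so the behavior is easy to follow; a real poller would likely feed it `time.monotonic()`.

```python
class PollSchedule:
    """Decides when the next pull is due; the interval is operator-adjustable."""

    def __init__(self, interval_s: float) -> None:
        self.interval_s = interval_s
        self.next_due_s = 0.0

    def set_interval(self, interval_s: float, now_s: float) -> None:
        """Change the interval; tightening it takes effect right away."""
        self.interval_s = interval_s
        # Never wait longer than the new interval for the next sample.
        self.next_due_s = min(self.next_due_s, now_s + interval_s)

    def due(self, now_s: float) -> bool:
        """Return True (and schedule the next pull) if a pull is due now."""
        if now_s >= self.next_due_s:
            self.next_due_s = now_s + self.interval_s
            return True
        return False


schedule = PollSchedule(interval_s=300.0)   # five minutes is usually plenty
schedule.due(now_s=0.0)                     # first pull happens immediately
schedule.set_interval(1.0, now_s=10.0)      # investigating: tighten to 1s
```

The metric source doesn't have to know or care that the interval changed, which is the appeal of keeping this decision upstream.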

Enter Pully McPushface.

Pully McPushface is just a name to get attention: attention to something that can potentially make people cease their asinine pull vs. push arguments. It is simply the acknowledgement that one can push or pull at layer 3 (the direction in which one establishes a TCP session) and also push (send) or pull (request/response) on layer 7, independent of one another. To be clear, this approach has been possible since TCP hit the scene in 1982… so why haven’t monitoring systems leveraged it?
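Here is a minimal Python sketch of one of those combinations, not our actual implementation: the agent dials out (a “push” in terms of who establishes the TCP session), and the collector then pulls over that same session with a request/response exchange. The one-line `GET load` protocol, the function names, and the reported value are all invented for illustration.

```python
import socket
import threading


def agent(collector_addr) -> None:
    """Dials OUT to the collector, then answers requests on that session."""
    with socket.create_connection(collector_addr) as conn, \
            conn.makefile("r", encoding="utf-8") as requests:
        for line in requests:               # ends when the collector hangs up
            if line.strip() == "GET load":
                conn.sendall(b"load 0.42\n")  # made-up measurement


def collector_pull_once(listener: socket.socket) -> str:
    """Accepts the agent's inbound session, then pulls over it."""
    conn, _ = listener.accept()
    with conn, conn.makefile("r", encoding="utf-8") as replies:
        conn.sendall(b"GET load\n")         # collector speaks first: a pull
        return replies.readline().strip()


# The collector only listens; it never needs a route to the agent.
listener = socket.create_server(("127.0.0.1", 0))
threading.Thread(
    target=agent, args=(listener.getsockname(),), daemon=True
).start()

reply = collector_pull_once(listener)
listener.close()
```

Swap which side calls `create_connection` and which calls `accept`, and the request/response exchange stays exactly the same. That independence is the whole point.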

At Circonus, we’ve recently revamped our stack to allow for this freedom in almost every level of our architecture. Since the beginning, we’ve supported both push and pull protocols (like collectd, statsd, JSON over HTTP, NRPE, etc.), and we’ll continue to do so. The problem was that these all (as do the pundits) conflate layer 3 and layer 7 “initiation” in their design. (The collectd agent connects out via TCP to push data, and a monitor connects into NRPE to pull data.) We’re changing the dialogue.

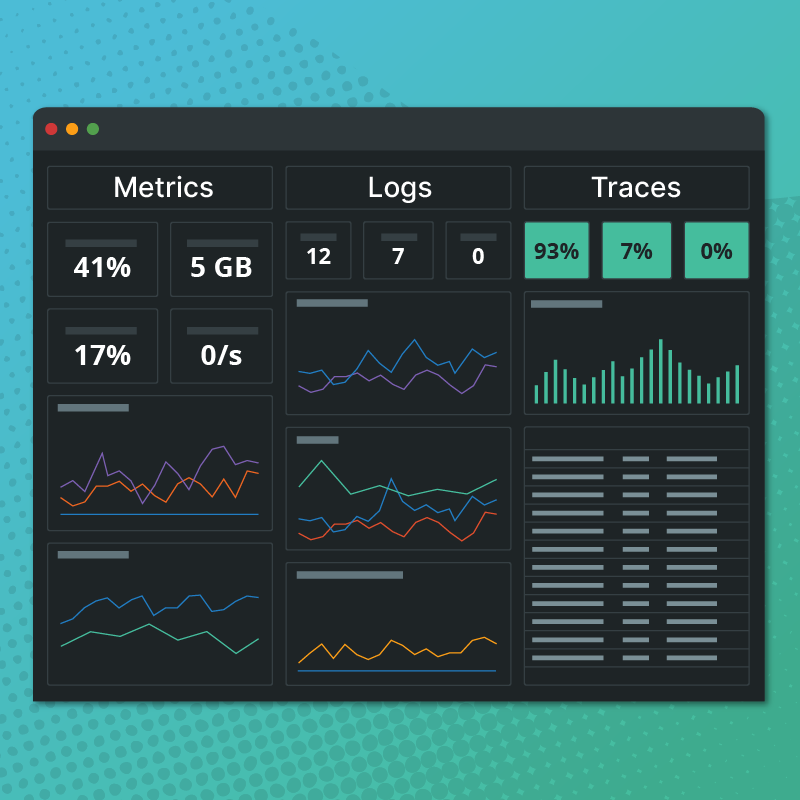

Our collection system is designed to be distributed. We have our first tier: the core system, our second tier: the broker network, and our third tier: agents. While we support a multitude of agents (including the aforementioned statsd, collectd, etc.), we also have our own open source agent called NAD.

When we initially designed Circonus, we did extensive research with world-leading security teams to understand whether our layer 3 connections between tier 1 and tier 2 should be initiated by the broker to the core or vice versa. The consensus (unanimous, I might add) was that security would be improved by controlling a single inbound TCP connection to the broker, and that the broker could be operated without a default route, preventing it from easily sending data to malicious parties were it ever duped into trying. It turns out that our audience wholeheartedly disagreed with this expert opinion. The solution? Be agnostic. Today, the conversations between tier 1 and tier 2 care not as to who initiates the connection. Prefer that the broker reach out? That’s just fine. Want the core to connect to the broker? That’ll work too.

In our recent release of C:OSI (and NAD), we’ve applied the same agnosticism to connectivity between tier 2 and tier 3. Here is where the magic happens. The NAD agent now has the ability to both dial in and be dialed to on layer 3, while maintaining all of its normal layer 7 flexibility. Basically, however your network and systems are set up, we can work with that and still get on-demand, high-frequency data out; no more compromises. Say hello to Pully McPushface.