Telemetry data – the metrics and measurement data emitted by all machines and devices, from servers to robots – is exploding. It is growing so fast that there are no reliable estimates of just what the growth rate looks like. Theo Schlossnagle, founder of Circonus and a widely respected computer scientist, estimates that telemetry data is growing at a rate of 1×10¹² every ten years. Suffice it to say, that’s a lot. And all of that data creates many challenges for the enterprise – how to collect, transfer, ingest, store, manage, and make sense of it efficiently.

When I worked in data centers in the 1980s, disk storage, compute, and I/O devices all sat on the same raised floor, so moving data was not so difficult. Data transfer was limited only by the size of the cables you were running between devices. But with the advent of cloud computing and the complex nature of distributed systems, data transfer got a lot more difficult.

To address this issue, Amazon literally rolled out (on a semi-trailer truck at re:Invent in 2016) the “Snowmobile” – a 45-foot-long ruggedized shipping container capable of storing up to 100PB of data. The idea is that you load your data onto a Snowmobile, which is then physically driven to AWS, where the data is uploaded to Amazon S3. Data transfer rates will vary with highway speed limits, I suppose.

But the challenges of managing telemetry data are only going to multiply with 5G networks, edge applications, and the rapidly expanding Internet of Things. Gartner reports that by 2022, 50% of enterprise-generated data will be created and processed outside of a traditional data center or cloud, up from less than 10% in 2019, and that number could reach 75% by 2025. In other words, it’s being generated at the edge.

As telemetry data accumulates quickly at the edge, it becomes infeasible to collect and send all of it to a centralized platform for monitoring and analysis due to its overwhelming mass – a phenomenon often referred to as “data gravity” (a term coined by Dave McCrory in a 2010 blog post). The answer to date has been to store data in the cloud and move your queries and analytics there. But that’s no longer possible given telemetry data volumes and real-time processing requirements. You end up making compromises about which telemetry to collect, how much, and how often, which leads to poor visibility, longer problem resolution times, and stale data in analytics and queries.

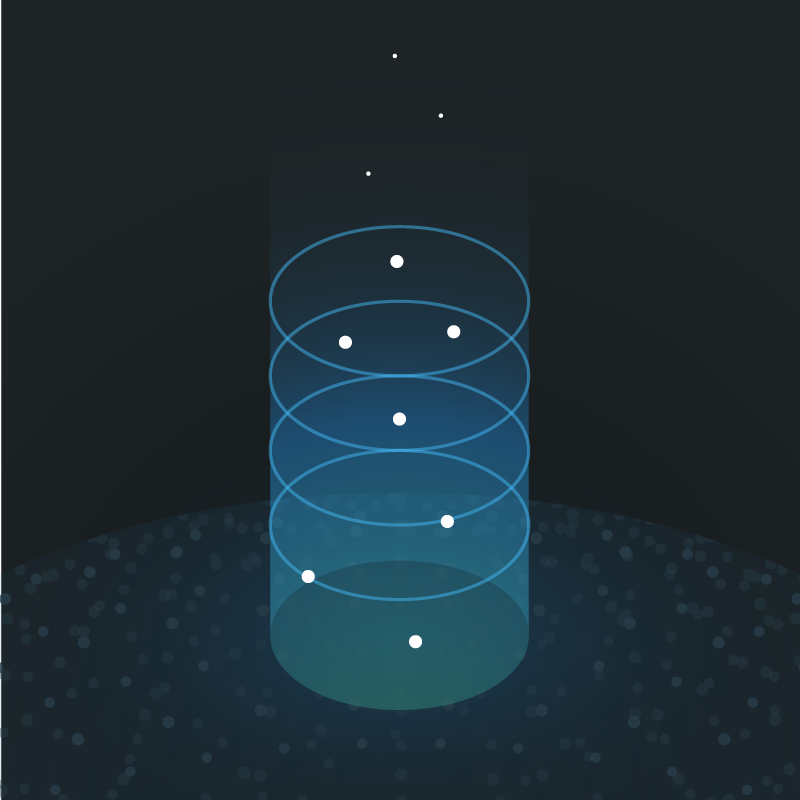

Circonus Zero-Gravity Data™ is a set of innovative and patented technologies and techniques that act together to neutralize the impact of data gravity – giving your data the effect of being weightless and eliminating the inherent challenges and limitations associated with massive data sets. With Zero-Gravity Data, the entire Circonus platform, from ingestion to analytics to storage, has been optimized for the best possible performance in dealing with massive data volumes – imperative for monitoring today’s modern architectures and applications at scale.

Here’s how:

Broker Technology. Compact and efficient, our brokers enable data collection in real time and at scale from any data source: data centers, cloud, edge processors, and even the smallest IoT sensors and devices.

Edge Processing. Where operational requirements dictate, we move processes such as analytics and queries to the data instead of trying to move data to the processes. Circonus fault and anomaly detection can be run at the edge for true real-time streaming analytics. With 5G networks and applications at the edge, there is simply not enough time to transfer data to the cloud in order to support operational analytics.

Patented Data Compression Technology. When we do need to move data, Circonus uses a patented log-linear histogram technique to radically compress the size of that data. Many benefits accrue from this compression, including lightning-fast collection, transmission, and ingestion of data; fractional storage requirements, which enable virtually limitless long-term data retention; and economics that dramatically reduce the amount of hardware and compute resources required to process the data (by up to 75%).
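To make the idea of a log-linear histogram concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the Circonus implementation: it assumes bins keyed by the two leading decimal digits of a value plus a power-of-ten exponent, and the names `bin_key`, `bin_lower_bound`, and `build_histogram` are hypothetical. The point is that any number of raw samples collapses into a small set of (bin, count) pairs, and that two such histograms merge by simply adding counts.

```python
from collections import Counter
from decimal import Decimal


def bin_key(value):
    """Map a positive value to (mantissa, exponent), mantissa in 10..99.

    The bin covers [mantissa * 10**exponent, (mantissa + 1) * 10**exponent).
    Decimal arithmetic avoids float rounding surprises at bin edges.
    """
    if value <= 0:
        raise ValueError("this sketch only handles positive values")
    d = Decimal(str(value))
    lead = d.adjusted()                 # power of ten of the leading digit
    mantissa = int(d.scaleb(1 - lead))  # keep two leading digits, truncate the rest
    return mantissa, lead - 1


def bin_lower_bound(key):
    """Return the lower edge of a bin as a float."""
    mantissa, exponent = key
    return float(Decimal(mantissa).scaleb(exponent))


def build_histogram(samples):
    """Collapse raw samples into a mapping of bin -> count."""
    return Counter(bin_key(v) for v in samples)


if __name__ == "__main__":
    # Hypothetical latency samples in milliseconds.
    edge_a = build_histogram([101, 102, 109, 110, 2500])
    edge_b = build_histogram([105, 2510, 0.3])
    merged = edge_a + edge_b            # merging is just adding counts
    for key in sorted(merged, key=bin_lower_bound):
        print(bin_lower_bound(key), merged[key])
```

Because every bin in this scheme is at most 10% wide relative to its lower edge, the histogram keeps a bounded relative error while its size depends on the spread of the values rather than on how many samples were recorded.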

Gravity-Aware Queries. Once data is ingested from our brokers, it is stored in Circonus IRONdb (a highly performant time series database), where it is optimized for the best possible query performance. In a multi-node IRONdb cluster, innovative “gravity-aware” technology moves queries to the node where the needed data resides so that no additional movement of the data is required.
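The general pattern of sending a query to the data, rather than the data to the query, can be sketched in a few lines of Python. This is a hypothetical illustration only: how IRONdb actually places data and routes queries is not described here, and the node names, the hash-based `owner` function, and the in-memory `store` are all assumptions made for the example. Each node computes a small partial result over the metrics it holds, and only those partials travel back to be combined.

```python
import hashlib
from collections import defaultdict

NODES = ["node-a", "node-b", "node-c"]  # hypothetical cluster members


def owner(metric):
    """Pick the node that holds a metric (assumed: a simple hash of its name)."""
    digest = hashlib.sha256(metric.encode("utf-8")).digest()
    return NODES[int.from_bytes(digest[:8], "big") % len(NODES)]


def plan_query(metrics):
    """Group the requested metrics by the node that already stores them."""
    plan = defaultdict(list)
    for metric in metrics:
        plan[owner(metric)].append(metric)
    return dict(plan)


def partial_mean(node_data, metrics):
    """Runs "on the node": reduce local samples to a (sum, count) pair."""
    values = [v for m in metrics for v in node_data.get(m, [])]
    return sum(values), len(values)


def query_mean(store, metrics):
    """Combine per-node partials; raw samples never leave their node."""
    partials = [partial_mean(store.get(node, {}), names)
                for node, names in plan_query(metrics).items()]
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count if count else None


if __name__ == "__main__":
    samples = {"cpu.web1": [10, 20], "cpu.web2": [30], "cpu.web3": [40, 50]}
    store = defaultdict(dict)
    for metric, values in samples.items():
        store[owner(metric)][metric] = values  # data lives on its owner node
    print(plan_query(list(samples)))
    print(query_mean(store, list(samples)))
```

In this sketch the coordinator only ever sees one (sum, count) pair per node, which is the property that matters: the cost of answering the query no longer grows with the volume of raw data that would otherwise cross the network.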

Swim Lanes. For ultimate performance, customers can choose to run Circonus IRONdb in their own “swim lane” which offers dedicated compute and memory resources. Swim lanes can be hosted by Circonus as SaaS or run and managed privately by the customer wherever they choose.

Numerous Circonus customers are already benefiting from zero-gravity data:

Xandr, an adtech demand-side platform (DSP), is ingesting data at the rate of 250 million measurements per minute. That data is so rich that it’s used to help identify fraud on the platform.

HBO is able to save entire seasons of infrastructure performance data (from the infrastructure used to deliver its HBO Max streaming service) so that it can conduct season-over-season historical analytics.

Major League Baseball (MLB) is able to consume all metrics from its statistics API instead of only samples – billions of measurements – giving what Riley Berton, MLB’s Principal Site Reliability Engineer, calls “delicious data” for its analysts and engineers.

A major service provider in the oil and gas industry, as part of a proof-of-concept exercise, used Circonus to ingest high frequency measurements off wellheads in an oil field in order to help optimize its fracking operations.

Data gravity is a challenge that is only going to grow as the volume of telemetry being generated continues to increase – especially at the edge. Circonus Zero-Gravity Data is one more in a series of industry innovations that eliminates the barriers between your data and your ability to harness the valuable insights it contains.

Would you like to try Circonus? Sign up for a free 60-day trial.